Battle-hardening Zenith

Next Steps for Zenith

Zenith is nearly ready to post on a venue like Hacker News where, if it catches on, it could generate massive server loads. I often brush off issues like this as “problems I’d love to have”, but this one needs serious consideration.

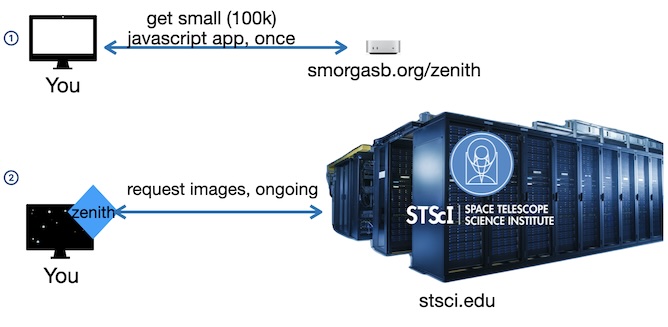

Zenith presents some unique challenges around load handling. To show each user’s actual zenith point, the images can only come from the Space Telescope Science Institute (STScI), and they can’t be cached. Users as little as 5 miles north or south of each other see completely different data.

To review: smorgasb.org is out of the picture after the initial access. After that, all the load is on stsci.edu.

If 1,000 users hit this website at once, we can dish out that little JavaScript app, no problem. But if all 1,000 of them simultaneously request images from STScI, then I’ve just launched a botnet attack on a scientific data archive.

Objective: Do not launch a botnet attack on a data archive.

And don’t get Cloudflare-banned again.

Precaution: announce myself:

- Our image request adds an extra “app” URL param which the server ignores, but it will show up in their logs, so they can recognize our app’s traffic even when it’s coming directly from user machines. Our request URL looks like this:

https://ps1images.stsci.edu/cgi-bin/&......s&app=zenithtrack

If there should be excessive load, they can contact me directly and I’ll shut it down. I can’t see their traffic dashboard to know their current load.
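The tagging idea can be sketched in a few lines. This is Python rather than the app’s JavaScript, and the base URL and the `ra`/`dec` parameter names are placeholders I made up for illustration, not the real STScI query. Only the `app=zenithtrack` tag comes from the actual request.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_request_url(url, app_name="zenithtrack"):
    """Return url with an app=<app_name> query parameter appended.

    Existing query parameters are kept in order; any earlier "app"
    parameter is dropped so the tag appears exactly once.
    """
    parts = urlsplit(url)
    query = [(key, value)
             for key, value in parse_qsl(parts.query, keep_blank_values=True)
             if key != "app"]
    query.append(("app", app_name))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Hypothetical URL, for illustration only.
print(tag_request_url("https://example.org/cgi-bin/cutout?ra=10.5&dec=51.3"))
```

Because the server ignores unknown parameters, the tag costs nothing at request time and only matters when someone reads the logs.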

My friendly contact there said “a few requests per second” would become a problem. The client requests a new image around every 25 seconds.

1 client running = 0.04 requests/sec

75 clients running = 3 requests/sec
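The same arithmetic as a tiny script, so the numbers are easy to rerun if the polling interval changes. The 3 requests/sec budget is my own reading of “a few requests per second”, not a limit STScI gave me.

```python
REQUEST_INTERVAL_S = 25   # from above: one image request roughly every 25 seconds
BUDGET_REQ_PER_S = 3.0    # assumption: my interpretation of "a few requests per second"


def requests_per_second(clients, interval_s=REQUEST_INTERVAL_S):
    """Aggregate request rate produced by `clients` running at once."""
    return clients / interval_s


def max_clients(budget_req_per_s=BUDGET_REQ_PER_S, interval_s=REQUEST_INTERVAL_S):
    """Largest number of simultaneous clients that stays within the budget."""
    return int(budget_req_per_s * interval_s)


print(requests_per_second(1))    # 0.04
print(requests_per_second(75))   # 3.0
print(max_clients())             # 75
```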

Q: Why only 3 requests/sec?

A: STScI is rendering those tiles from raw data, based on specific parameters.

Q: How do we know how many clients are running?

A: We can’t. This server sees each user only once, when they first connect. We don’t know how long each client keeps running Zenith, requesting images all the while. I have reservations about having the app report usage back. Can I estimate? To be conservative about load, I should estimate high; if I’m pessimistic about holding people’s attention, I’d estimate low.

When a critical number of clients are running and we need to divert them from hitting STScI, how can we stop them? (Note: this is open source, so anyone can run an instance.)

A better-than-nothing approach

- monitor traffic to /zenith on this server

- if hits > threshold, throw “kill switch”

- the kill switch changes the program’s behavior so it no longer contacts stsci.edu and instead downloads from a single cache of tiles I’ve prepared for latitude 51.3°, the skies over Stonehenge.

The kill switch formula could be something like:

Number of clients running ≈ rate of recent requests (new visitors per minute) × estimated session duration (minutes)
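A minimal sketch of that estimate on the server side. Everything here is hypothetical: the `KillSwitch` class, the 5-minute counting window, and especially the 10-minute session length, which is a guess I can’t measure. The 75-client threshold is the one worked out earlier. I’ve made the switch latch once tripped, so it stays on until I reset it by hand.

```python
import time
from collections import deque


class KillSwitch:
    """Estimate running clients from recent hits to /zenith and latch when too many."""

    def __init__(self, max_clients=75, session_s=600.0, window_s=300.0):
        self.max_clients = max_clients   # threshold before diverting clients
        self.session_s = session_s       # assumed session length (a guess)
        self.window_s = window_s         # how far back "recent" reaches
        self.hits = deque()              # timestamps of recent page hits
        self.tripped = False

    def _trim(self, now):
        # Forget hits that have aged out of the counting window.
        while self.hits and self.hits[0] <= now - self.window_s:
            self.hits.popleft()

    def estimated_clients(self, now=None):
        """clients ~= arrival rate (hits/sec) * assumed session duration (sec)."""
        now = time.time() if now is None else now
        self._trim(now)
        return len(self.hits) / self.window_s * self.session_s

    def record_hit(self, now=None):
        """Call once per page hit; returns True once the switch has been thrown."""
        now = time.time() if now is None else now
        self.hits.append(now)
        if self.estimated_clients(now) > self.max_clients:
            self.tripped = True          # latches until reset manually
        return self.tripped


switch = KillSwitch()
for second in range(40):                 # 40 hits in 40 seconds
    thrown = switch.record_hit(now=1000.0 + second)
print(thrown)                            # True
```

The client would then need some way to read the flag at startup and fetch from the tile cache instead of stsci.edu; that part is not shown here.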

The Tile Cache

The tiles in the tile cache can be image-processed ahead of time, which reduces the load on the client.

Storage:

Tiles are 10 arcminutes wide = 1/6 degree

360° ÷ (1/6)° = 2,160 tiles

To create the cache:

- download a ribbon of tiles ✅ done

- do image processing ✅ done

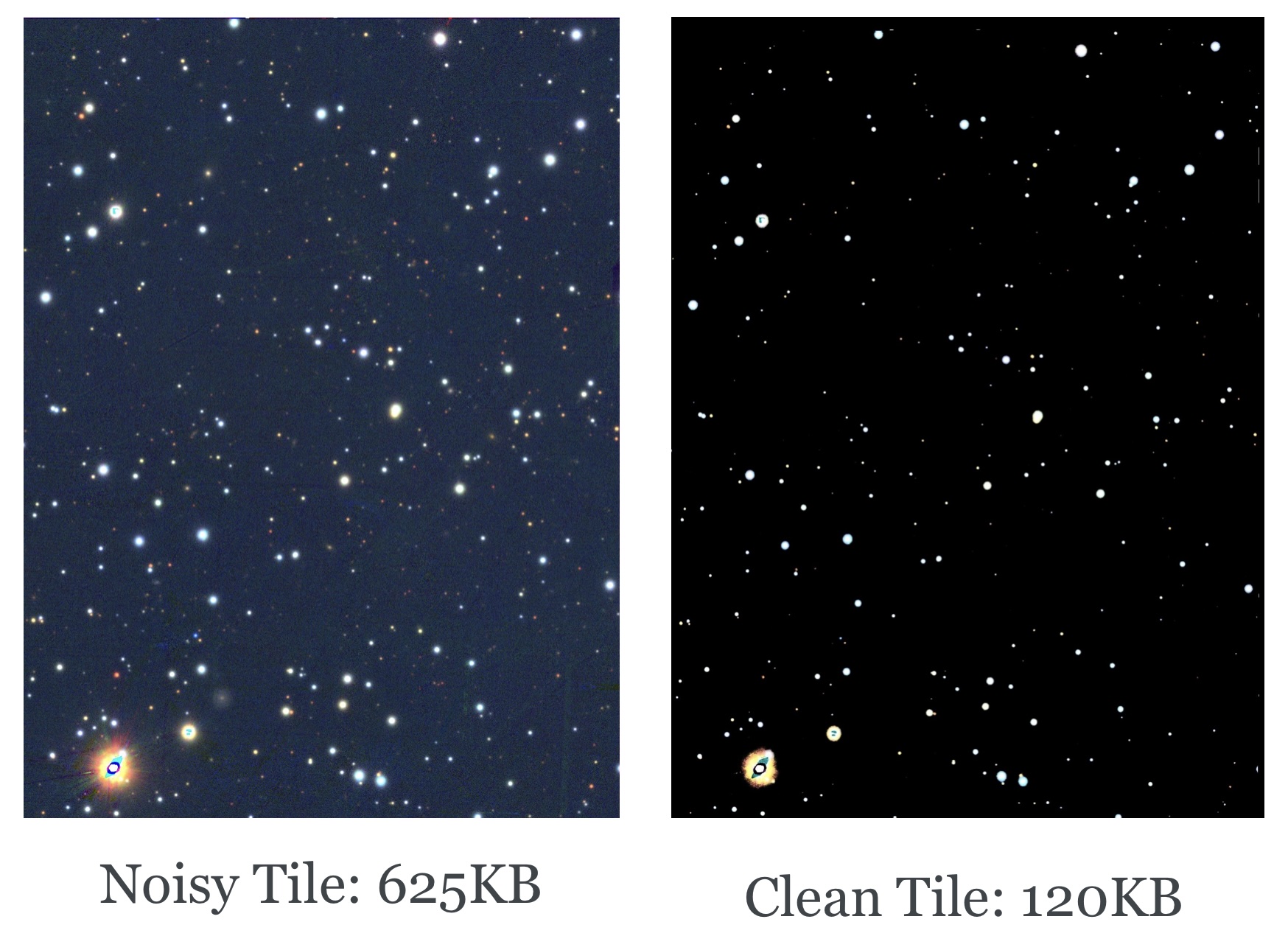

One benefit of preprocessing the tiles: I didn’t appreciate how much smaller they’d get. The dimensions don’t shrink, but the files get significantly smaller, because the JPEG compression algorithm is tortured by all the noise in the raw images. When the fuzzy pixels turn black, JPEG compression gets way more efficient.

End result:

Total Raw tile set size: 1.65GB

Total Processed: 500MB
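A quick check of the storage numbers above, assuming decimal units (1 MB = 1,000 KB) and that the totals cover exactly one ribbon of 2,160 tiles.

```python
TILE_WIDTH_ARCMIN = 10                      # from above: tiles are 10 arcminutes wide
ARCMIN_PER_DEGREE = 60

tiles = 360 * ARCMIN_PER_DEGREE // TILE_WIDTH_ARCMIN

RAW_TOTAL_KB = 1_650_000                    # 1.65 GB raw tile set
PROCESSED_TOTAL_KB = 500_000                # 500 MB after processing

avg_raw_kb = RAW_TOTAL_KB / tiles
avg_processed_kb = PROCESSED_TOTAL_KB / tiles
size_ratio = PROCESSED_TOTAL_KB / RAW_TOTAL_KB

print(tiles)                                # 2160
print(round(avg_raw_kb))                    # 764
print(round(avg_processed_kb))              # 231
print(round(size_ratio, 2))                 # 0.3
```

So the processed set is roughly 30% of the raw size, and the per-tile average lands near 230KB.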

Next: how many concurrent clients can we support if we serve tiles from our cache?

Q: What bandwidth is needed for a single client?

While each processed tile averages 230KB, the app fetches several on startup, so there is a surge. Over hours, a client’s average usage might come down to ~25KB/s. But I need to account for that surge, especially if we get a couple hundred hits all at once.

smorgasb.org has a 1 Gb/s fiber connection, and in theory we should be able to support ~100Mb/s upload. Let’s allow 50Mb/s.

I’m told a Hacker News Hug of Death can be something like “10,000 page hits over the day, most of that in the first 90 minutes”

That could be on the order of 100 users/minute, each requesting 4 tiles = ~1MB/user.
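Here is a rough budget that puts those figures together. It is a back-of-the-envelope sketch: it assumes arrivals are spread evenly across each minute (real surges are burstier) and it treats the ~25KB/s steady-state figure as accurate.

```python
TILE_KB = 230              # average processed tile size
TILES_ON_STARTUP = 4       # tiles each new client fetches at once
STEADY_KB_PER_S = 25       # long-run average per running client (estimate)
UPLOAD_BUDGET_MBIT = 50    # upload bandwidth I'm willing to spend
ARRIVALS_PER_MIN = 100     # guessed Hacker News peak


def startup_mbit_per_s(arrivals_per_min):
    """Bandwidth spent on the initial tile burst of newly arriving clients."""
    kb_per_s = arrivals_per_min / 60 * TILES_ON_STARTUP * TILE_KB
    return kb_per_s * 8 / 1000


def steady_mbit_per_s(clients):
    """Bandwidth spent on clients that are already running."""
    return clients * STEADY_KB_PER_S * 8 / 1000


def max_steady_clients(budget_mbit, arrivals_per_min):
    """Running clients that fit in whatever the startup surge leaves over."""
    remaining_mbit = budget_mbit - startup_mbit_per_s(arrivals_per_min)
    return int(remaining_mbit * 1000 / 8 / STEADY_KB_PER_S)


print(round(startup_mbit_per_s(ARRIVALS_PER_MIN), 2))             # 12.27
print(steady_mbit_per_s(200))                                     # 40.0
print(max_steady_clients(UPLOAD_BUDGET_MBIT, ARRIVALS_PER_MIN))   # 188
```

Under these assumptions the startup surge alone uses about a quarter of the budget, and the rest covers fewer than 200 running clients. At 100 arrivals per minute, that many accumulate within a couple of minutes if people stick around.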

We seem to be right on the edge of bullet-proof.

But bullet-proof is not something to be “on the edge of”

I need a CDN.

To Do:

- Look at GitHub Pages. Probably give the tiles their own repo.

- implement & test kill switch.

To be continued