Claude Code Investigation - Zoom for Zenith

Zoom out for Zenith

Zenith is my beautiful, awe-inspiring, inner-peace-inducing, unique planetarium app that puts the viewer in a straitjacket, lying flat on the ground, tapes their eyelids open, and shoves a 180x telescope in their face, pointed straight up, with no adjustments possible. And then gaslights them into believing that’s what they most want, even though they never asked for it. And shames them for not experiencing sublime inner happiness.

FEEL INNER HAPPINESS - NOW!

User: Can I turn my head? Zenith: No!

Can I look at a different time? NO NO!

But can’t we zoom out just a little? YOU AI SLOP LOVING FOOL. HOW CAN YOU NOT WANT THIS BEAUTIFUL PERFECT IMMUTABLE ZEN EXPERIENCE I LOVINGLY CRAFTED FOR YOU.

How about a - I HATE YOU DAD YOU RUIN EVERYTHING. IT’S NEVER LIKE THIS WHEN I STAY AT MOM AND SHAWN’S. <slam>

Zenith wants to show the earth turn, so it zooms way in on the sky. No other planetarium app does this specific thing.

Many other planetarium apps have those other control options. Why reinvent?

Do one thing well, say the design purists.

Do more things, say the users.

Do You Wanna Dance, asked the Beach Boys in 1965, in their first single following Brian Wilson’s nervous breakdown the previous year.

I believed users would first look for a zoom & pan control and find the tagline -

“no control: We put the Zen in Zenith”

and be so overwhelmed with inner peace and appreciation, they’d forget ever wanting anything.

There’s another dirty secret: pointing in a different direction, or playing from a different point in time, wouldn’t change the view in any perceptible way. The star fields all look pretty much the same, magnified that much. I’ll swear to you that Zenith does show the date, time and location it claims, but if it didn’t, you couldn’t tell.

So why consider user controls?

I admit it, a zoom out control would serve an important purpose, and that’s why I’m going to make Claude build it, while I slam down my ninth free Keurig.

We claim that the motion is real time, due to the earth’s turning, and we demonstrate it with math and charts, but the user still, to some degree, takes it on faith.

If the screen allowed a wide view, where we see very little motion, and then zoomed in to show greater motion, then perhaps that could make the connection we need to fully trust the app’s essential claim.

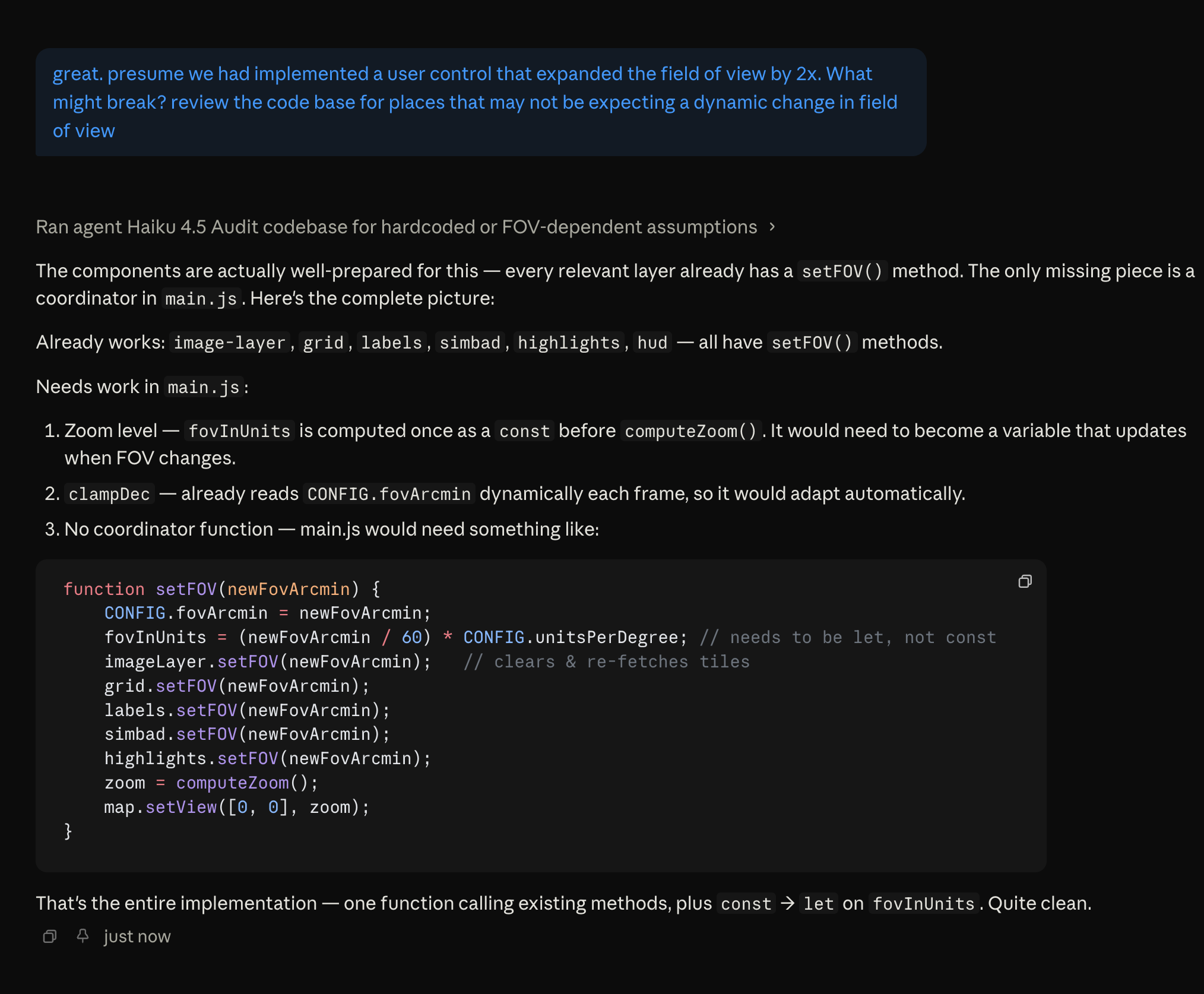

Technical implementation.

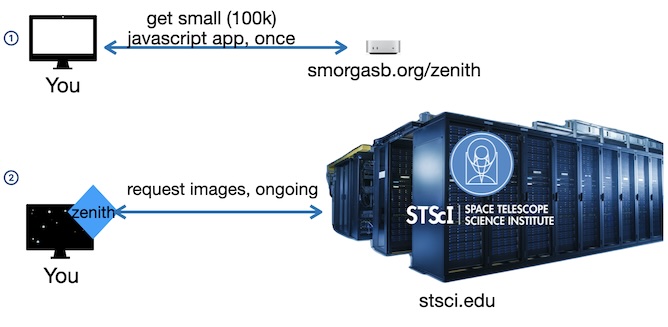

The JPG image tiles come as a row of images, each the height of the screen. Each image is requested directly from the STScI server by the client device. smorgasb.org simply supplies a small JavaScript app when you first access Zenith, and steps aside.

The image API specifies the celestial location and size of each tile, so zooming out should just be a matter of requesting tiles that span a larger patch of sky, at the same total pixel size.
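Here is a rough sketch of that idea, in Python for brevity (the app itself is JavaScript). The field names `ra_deg`, `dec_deg`, `size_arcmin` and `pixels`, and all the numbers, are placeholders made up for illustration; they are not the real STScI API parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TileRequest:
    """Hypothetical description of one tile: where it is and how big it is."""
    ra_deg: float        # tile centre, right ascension (assumed parameter)
    dec_deg: float       # tile centre, declination (assumed parameter)
    size_arcmin: float   # angular size of the tile on the sky
    pixels: int          # pixel size of the returned image

    @property
    def arcsec_per_pixel(self) -> float:
        return self.size_arcmin * 60.0 / self.pixels


def zoom_out(tile: TileRequest, factor: float) -> TileRequest:
    """Same centre, same pixel size, larger patch of sky."""
    if factor <= 0:
        raise ValueError("zoom factor must be positive")
    return TileRequest(tile.ra_deg, tile.dec_deg,
                       tile.size_arcmin * factor, tile.pixels)


base = TileRequest(ra_deg=83.8, dec_deg=-5.4, size_arcmin=10.0, pixels=1000)
wide = zoom_out(base, 4.0)
print(base.arcsec_per_pixel, wide.arcsec_per_pixel)
```

The image stays the same size on screen; each pixel just covers more sky, which is all "zooming out" means here.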

There’s a Hitch

A little detail: Fetching an image tile actually takes 2 network requests to STScI. The image request requires some magic metadata along with the coordinates. Request #1 retrieves the metadata, which is quite small. Then Request #2 sends that metadata in a request for the actual image at that location.

So? Request metadata for a larger tile.
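The two-step dance looks roughly like this. It is a sketch only: `get_metadata` and `get_image` are hypothetical stand-ins for the two STScI calls, passed in as plain functions so the example runs without touching the network.

```python
from typing import Callable, List

calls: List[str] = []  # records which "network requests" were made


def fake_get_metadata(ra_deg: float, dec_deg: float) -> str:
    """Stand-in for Request #1: small metadata for one sky location."""
    calls.append("metadata")
    return f"meta:{ra_deg}:{dec_deg}"


def fake_get_image(ra_deg: float, dec_deg: float, metadata: str) -> bytes:
    """Stand-in for Request #2: the image, which needs the metadata."""
    calls.append("image")
    return f"jpg[{metadata}]".encode()


def fetch_tile(ra_deg: float, dec_deg: float,
               get_metadata: Callable[[float, float], str],
               get_image: Callable[[float, float, str], bytes]) -> bytes:
    metadata = get_metadata(ra_deg, dec_deg)      # Request #1
    return get_image(ra_deg, dec_deg, metadata)   # Request #2


tile = fetch_tile(10.0, 20.0, fake_get_metadata, fake_get_image)
print(calls)
```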

The hitch has a hitch … The problem is, to cut our network requests in half, and since the metadata is small, I have already cached the metadata for the entire sky - all 1.5 million individual locations. The app is no longer set up to retrieve metadata as it runs.
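In sketch form, the cached setup swaps Request #1 for a dictionary lookup, so each tile costs one network request instead of two. The cache layout here (a dict keyed by location) and the `get_image` stand-in are assumptions for illustration, not the app's actual data structures.

```python
from typing import Callable, Dict, List, Tuple

Location = Tuple[float, float]  # (ra_deg, dec_deg), hypothetical key format

image_requests: List[Location] = []  # records the only network calls left


def fake_get_image(location: Location, metadata: str) -> bytes:
    """Stand-in for the image request; no metadata request exists any more."""
    image_requests.append(location)
    return f"jpg[{metadata}]".encode()


def fetch_tile_cached(location: Location,
                      metadata_cache: Dict[Location, str],
                      get_image: Callable[[Location, str], bytes]) -> bytes:
    # Metadata comes from the pre-built cache. There is no network fallback,
    # so a location missing from the cache raises KeyError.
    metadata = metadata_cache[location]
    return get_image(location, metadata)


cache = {(10.0, 20.0): "meta-a", (10.5, 20.0): "meta-b"}
tiles = [fetch_tile_cached(loc, cache, fake_get_image) for loc in cache]
print(len(image_requests))
```

The catch is visible in the lookup: the cache only knows the locations it was built with, so anything new would need either the runtime metadata call back or a cache that happens to work for other tile sizes.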

Would you like to know how to get Cloudflare-Banned from the Space Telescope Science Institute? Which is serving public domain, free data?

Let’s just say it’s not from NOT making 1.5 million metadata calls in a row.

On the plus side, it got me a quick intro to a senior astronomer at STScI who, when I explained what I was doing, was extremely helpful.

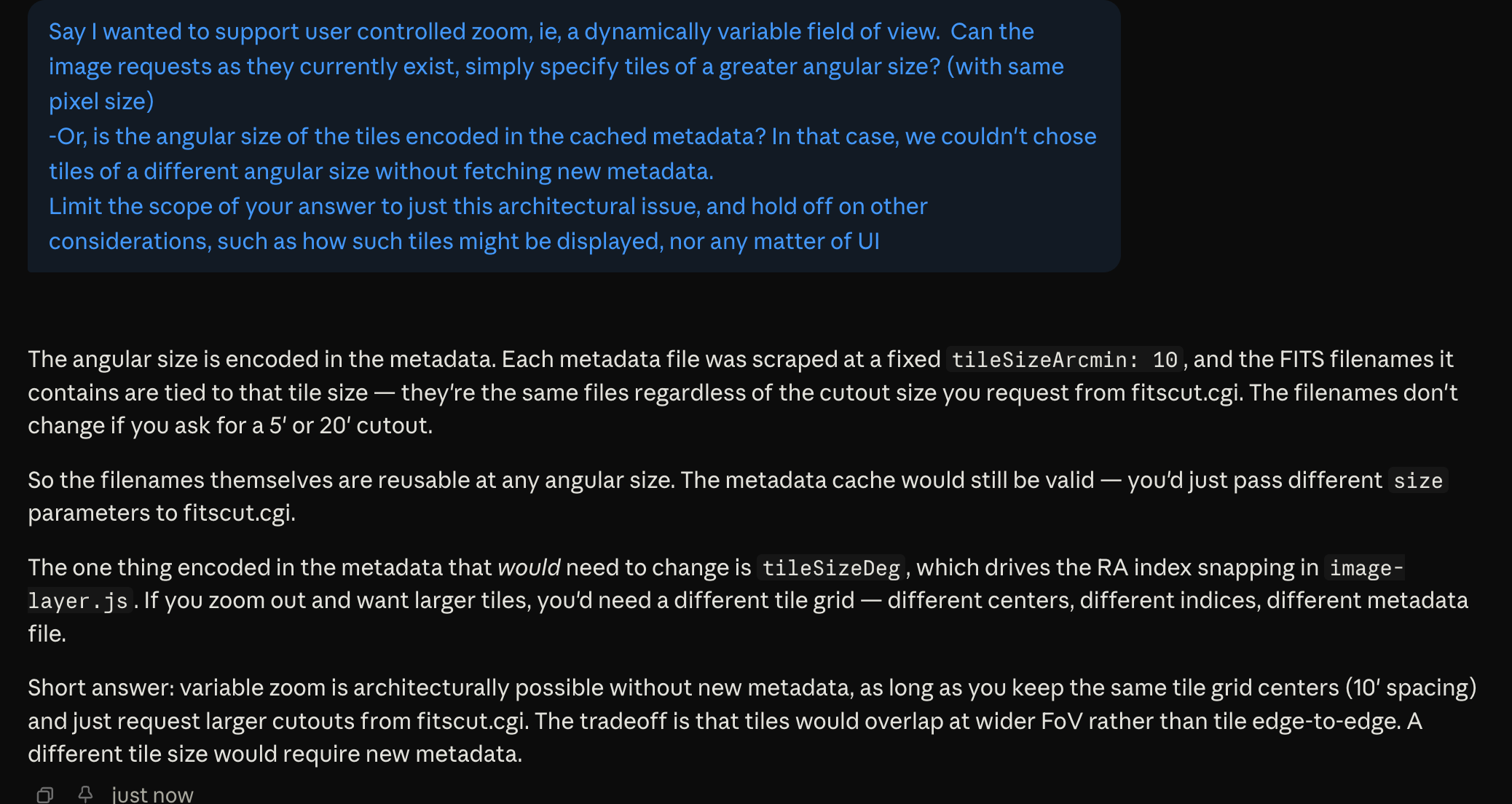

With an understanding of our metadata cache, we’re now ready to frame our questions around the design of variable field of view:

- Can the existing cached metadata be used for requesting tiles of a larger angular size? (oh, please say yes😬) Or does it already encode tiles of a specific angular size?

- If so, will the current architecture support a dynamically variable field of view, i.e. a user-controlled zoom level, by simply requesting those tiles at a larger angular size (and the same pixel size)?

If we can get both of those to Yes, then we should be golden. Let’s fire up Claude Code.

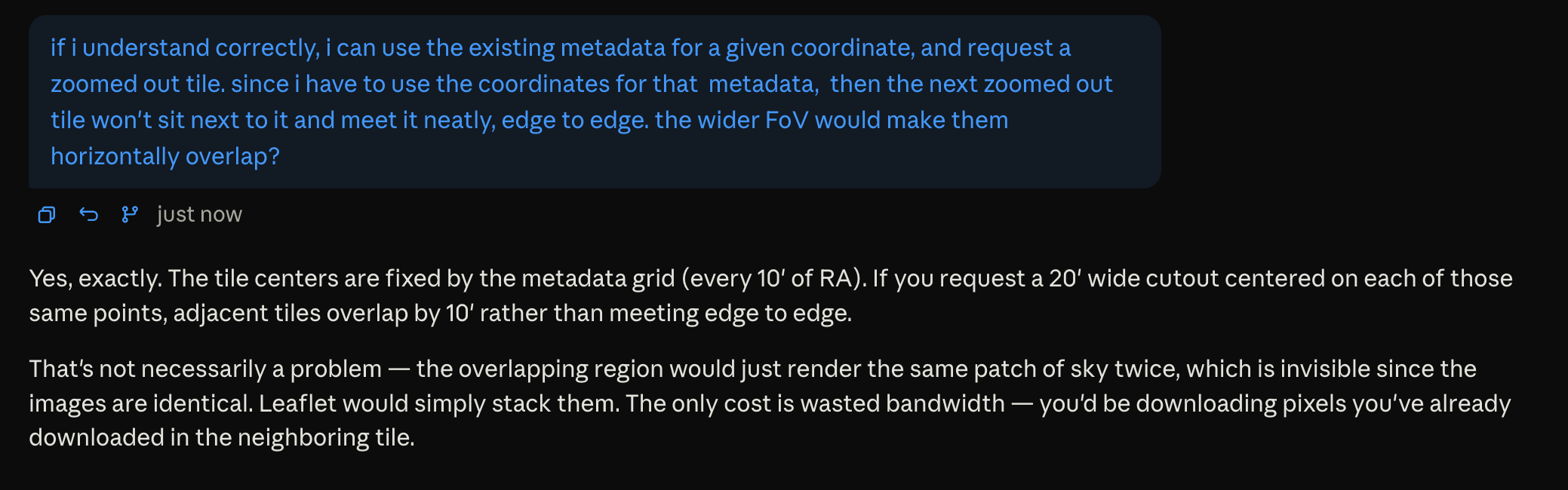

I get it. We can request larger tiles, but they’ll overlap slightly. That’s fine - we’d go from having tiles meeting edge to edge like this:

to overlapping like this:

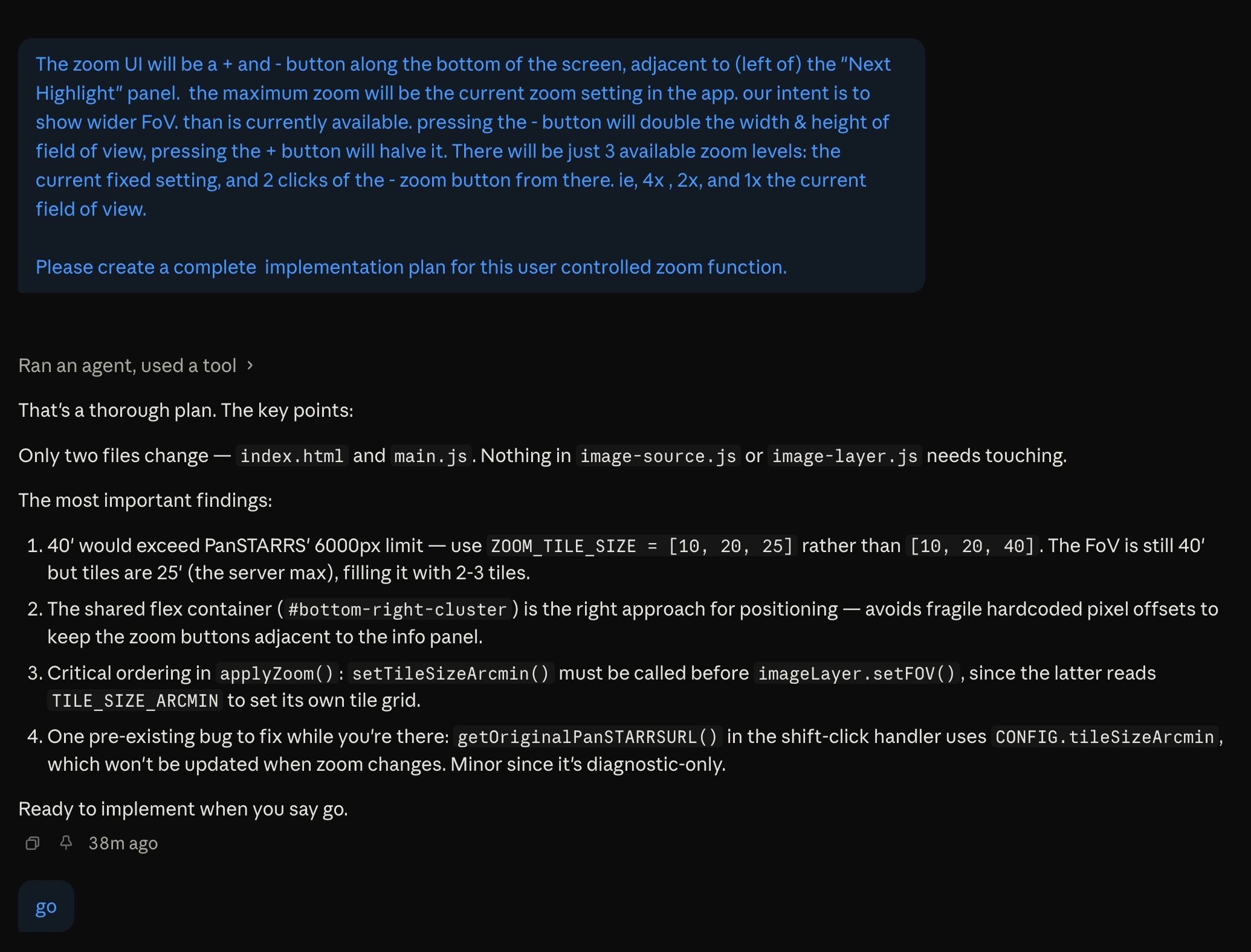
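The overlap is simple one-dimensional geometry: if the tile centres stay where they are and each tile gets wider, neighbours share whatever width exceeds the spacing. A minimal sketch, with made-up spacing and width values (the real numbers depend on the cached tile locations):

```python
def neighbour_overlap_deg(spacing_deg: float, tile_width_deg: float) -> float:
    """Sky shared by two adjacent tiles whose centres are spacing_deg apart."""
    return max(0.0, tile_width_deg - spacing_deg)


def overlap_fraction(spacing_deg: float, tile_width_deg: float) -> float:
    """Share of each tile's width that is duplicated in its neighbour."""
    return neighbour_overlap_deg(spacing_deg, tile_width_deg) / tile_width_deg


edge_to_edge = neighbour_overlap_deg(spacing_deg=1.0, tile_width_deg=1.0)
widened = neighbour_overlap_deg(spacing_deg=1.0, tile_width_deg=1.25)
print(edge_to_edge, widened, overlap_fraction(1.0, 1.25))
```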

It wasn’t a one-shot, but Claude’s design largely worked. After a bit of geometry debugging, it became clear that even if this feature worked as designed, it wouldn’t accomplish my goal. So I’m dropping this pursuit.

The thematic goal was: use zooming in/out to bridge the view from non-moving to moving. But the most zoomed out view was only 4x the field of view - 4 rice grains long. So the movement was slower, but still unnaturally fast-looking, so it didn’t connect intuitively with the naked eye view.
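The arithmetic behind that disappointment, as a small sketch. The sidereal rate is standard astronomy (the sky turns 360° in one sidereal day, about 15 arcseconds per second at the celestial equator); the field-of-view number is a made-up placeholder, not Zenith's actual value.

```python
import math

SIDEREAL_DAY_S = 86164.0905
# Apparent drift of the sky at the celestial equator, in arcsec per second.
SIDEREAL_RATE_ARCSEC_PER_S = 360 * 3600 / SIDEREAL_DAY_S


def crossing_time_s(fov_deg: float, declination_deg: float = 0.0) -> float:
    """Seconds for a star to drift across a field of view of fov_deg."""
    rate = SIDEREAL_RATE_ARCSEC_PER_S * math.cos(math.radians(declination_deg))
    return fov_deg * 3600 / rate


zoomed_in_fov_deg = 0.25                  # hypothetical zoomed-in field
zoomed_out_fov_deg = 4 * zoomed_in_fov_deg

t_in = crossing_time_s(zoomed_in_fov_deg)
t_out = crossing_time_s(zoomed_out_fov_deg)
print(round(t_in), round(t_out))
```

Whatever the real field of view is, a 4x wider view only makes a star take 4x as long to cross the screen. That is still a crossing measured in minutes, while the naked-eye sky takes hours to turn noticeably.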

To get an even larger field of view would mean multiple rows of tiles, which is a much larger architectural change.

Secondly, what we could implement could hardly be called a dynamic zoom. It was more like ‘rebooting the app’ with different sized tiles, which take time to download.

And now I’ve been watching a visual field slide to the right for so long that when I look at stationary text, it feels like it’s sliding left.